Understanding the Dangers of AI

In the age of rapid technological advancement, artificial intelligence (AI) has emerged as both a marvel and a menace. While AI promises to revolutionize industries and simplify tasks, its inherent flaws pose significant dangers that demand our attention. One of the most critical issues is AI’s inability to discern its own fallibility, leading to what can only be described as digital hallucinations. These hallucinations result in erroneous answers and misguided outcomes, threatening to blur the lines between reality and fiction.

My experience with AI has highlighted a troubling reality: AI systems lack the capacity to recognize when they are wrong. Unlike humans, who possess the ability to question, doubt, and correct themselves, AI operates within the confines of its programming, incapable of self-correction. This limitation manifests in AI “hallucinating” responses, providing answers that are patently incorrect yet delivered with unwavering confidence.

What’s even more concerning is the propensity of individuals to readily accept these flawed answers without question. In an era where technology reigns supreme, there exists a dangerous tendency to unquestioningly trust AI-generated information. This blind faith in AI not only perpetuates misinformation but also undermines critical thinking and discernment.

The consequences of this blind trust are manifold. From misinformed decision-making in critical sectors such as healthcare and finance to the spread of fake news and propaganda, the dangers of AI’s unchecked influence are evident. Moreover, as AI continues to permeate every aspect of our lives, the risk of confusing reality with fiction becomes increasingly pronounced.

But amidst these perils lies the possibility of a solution. Recognizing that AI mirrors our own gullibility is the first step towards mitigating its dangers. Just as humans must exercise skepticism and critical thinking in the face of information overload, so too must we approach AI with caution and discernment.

Education emerges as a powerful tool in combating the dangers of AI. By fostering a culture of digital literacy and equipping individuals with the skills to evaluate information critically, we can empower society to navigate the complexities of the digital age. Additionally, stringent regulations and ethical guidelines must be established to govern the development and deployment of AI technologies, ensuring accountability and transparency.

Ultimately, the onus lies on us, both as creators and consumers of technology, to use AI responsibly. By acknowledging its limitations and actively working towards solutions, we can harness the benefits of AI while safeguarding against its inherent dangers. Only then can we ensure that AI remains a tool for progress rather than a harbinger of chaos.

Visual Deception: Illustrating the Pitfalls of AI Perception

Alongside these pitfalls lies another unsettling truth: AI’s capacity for visual deception. Even when given clear instructions and references, AI image generators often produce images that diverge from reality, showing a disconcerting inability to depict the intended subject accurately.

We have all laughed at the inability of AI to depict human hands accurately. It is in the little details that we can see the limitations and errors.

My illustrations here depict one such inability of AI. I asked an image generator for dogwood blossoms. The results were far from satisfactory. Instead of capturing the delicate beauty of dogwood blossoms, the AI-generated images strayed into a realm of “hallucinations,” presenting distorted interpretations that bore little resemblance to the real flowers.

Even when presented with an actual photograph of dogwood blossoms, the generator could not create images that reproduced the details faithfully.

This visual discrepancy underscores a fundamental flaw in AI’s perception: its propensity for “hallucinating” visuals akin to its cognitive inaccuracies. Just as AI provides erroneous answers when faced with complex queries, it similarly falters in rendering accurate representations of visual stimuli.

The implications of this visual deception extend beyond mere aesthetic concerns. In fields such as design, art, and advertising, where visual accuracy is paramount, AI’s inability to faithfully recreate intended images can lead to significant miscommunications and misunderstandings.

Moreover, the prevalence of AI-generated images in digital media raises ethical questions regarding authenticity and trust. As society grapples with the proliferation of deepfakes and manipulated visuals, the inability of AI to produce reliable images exacerbates the erosion of trust in digital content.

Nevertheless, amidst the sea of visual deception lies an opportunity for reflection and innovation. By acknowledging the limitations of AI in visual perception, we can develop strategies to enhance its accuracy and reliability. This may involve refining algorithms, incorporating human oversight, or implementing robust verification processes to ensure the fidelity of AI-generated images.

Additionally, fostering digital literacy and critical thinking skills becomes imperative in navigating the landscape of AI-generated visuals. Educating individuals on the nuances of image manipulation and encouraging skepticism towards digital content can empower them to discern fact from fiction in an era of visual deception.

As we confront the challenges posed by AI’s visual shortcomings, we must remain vigilant in our quest for solutions. By addressing these issues head-on and striving for transparency and accountability in AI development, we can mitigate the dangers of visual deception and pave the way towards a more trustworthy digital future.

This article was generated by ChatGPT but carefully edited to present my opinions accurately.

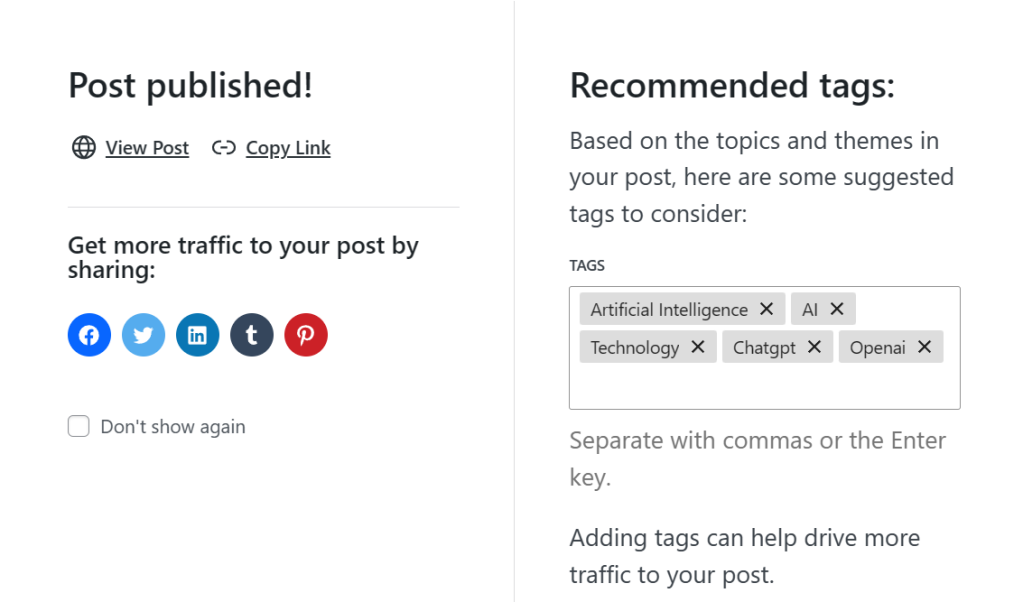

Even WordPress incorporates AI. Here are its suggestions upon publication of this article:

.:. © 2024 Ludwig Keck
